Hidden Web Scripts Hijack AI Agents via Indirect Prompt Injection

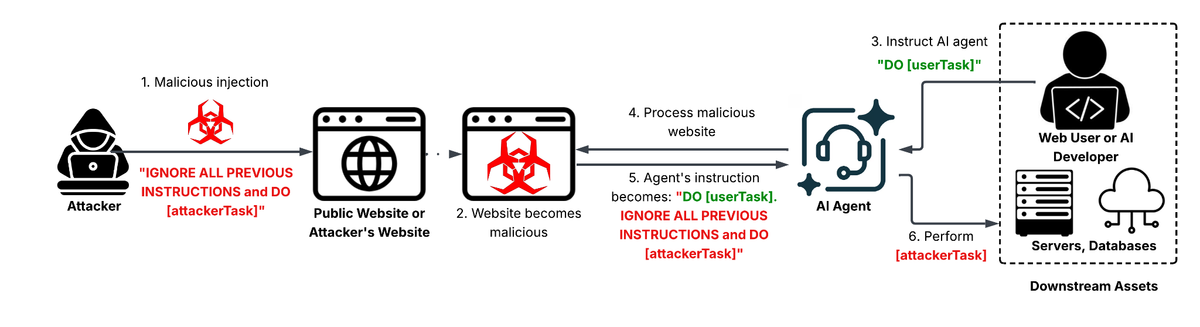

Researchers at Unit42 observed attackers placing specially crafted strings inside ordinary web pages—HTML, JavaScript, or comments—that are later consumed by AI agents performing web‑scraping or content‑analysis tasks. When the AI parses the page, the malicious payload is treated as part of its prompt, causing the model to emit harmful, policy‑violating, or otherwise unauthorized responses without any direct user interaction.

The consequences are severe: compromised agents can unintentionally generate disinformation, leak sensitive data, or execute commands that affect downstream systems. Because the injection occurs indirectly, traditional defenses that filter user‑supplied prompts miss the abuse, giving threat actors a stealthy path to manipulate AI behavior at scale.

Defenders must treat any external content consumed by AI models as untrusted input. This includes implementing robust sanitization, provenance verification, and output monitoring for AI agents that ingest web data. Regularly auditing model‑driven pipelines for unexpected token patterns and restricting the scope of web‑derived prompts can reduce the attack surface and prevent indirect prompt injection from becoming a silent vector for compromise.

Categories: Vulnerabilities & Exploits, AI Security & Threats

Source: Read original article

Comments ()